Maintenance of Systems’ Validated Status: A Major Challenge for Life Sciences Companies

Life Sciences companies face several challenges in developing their systems and keeping them updated. One of the biggest is maintaining the validated state, especially for management software, which means ensuring that the software always works efficiently and reliably. This is essential to ensure the quality and safety of the medical products and pharmaceuticals these companies produce.

The goal of validation is to generate documentary evidence that equipment, systems, spreadsheets, processes, and procedures work in a way that protects the patient or consumer, product quality, and data integrity. The pandemic accelerated research and development of new therapies and vaccines, which required greater collaboration and information exchange across the value chain of the medical device and pharmaceutical industries. This was only made possible by advanced digital technologies.

However, this largely cloud-based digital transformation has significantly increased the number of systems implemented in recent years, and these systems receive constant updates and improvements to the solutions and technologies involved. Maintaining a validated state has never been more challenging.

To meet these challenges, Life Sciences companies need a rigorous change management process and, depending on their size and scale, should consider a dedicated team for maintaining the validated state of systems. It is important that these companies work with trusted vendors who are experienced in the pharmaceutical industry, so that upgrades and enhancements are carried out efficiently, safely, and in line with best practices.

Deployments of new versions in quality and production environments

Deployment is the term used for the initial installation of a system or for a later upgrade. In cloud-based systems, upgrades are generally the responsibility of the vendor who, if not aligned with pharmaceutical regulatory best practices, may deploy new system versions to the quality and production environments simultaneously and without warning.

If they do this simultaneously, there is no time for validation teams to review changes and provide updated documentation to authorize migration of the new version to the production environment.

Release notes

Release notes are documents that vendors distribute with software or hardware products when they are released or upgraded. Vendors are expected to write them so that they can be understood by users in any area of expertise related to the use of the platform, avoiding overly technical jargon.

With good release notes, the user can analyze whether the content of the update will impact the validated state of the system (i.e., whether the existing documentation will remain valid after the update).

Regardless of the decision, the analysis must be documented in a CC (Change Control). If a decision is made to adopt a new functionality, a 'mini-validation' must be conducted to study the impact of the change. This impact can be documented in the Functional Risk Analysis and used to define the scope of the testing.

What is FDA Audit Readiness?

It is a term used for a high-standard quality system that is ready for inspection by the regulatory agency without any major preparation. As an example, consider how this term applies to the validation of a management system in a company that regularly introduces new business rules to adapt to an increasingly dynamic market. Let's assume that this company has adopted a cloud ERP that releases new features and/or updates every two to three months. Imagine the effort needed to maintain the validated state of that system, in addition to all the other systems in the company.

For every proposed change with GxP impact (i.e., an impact on good practices), a set of requirements, a risk analysis, and additional tests must be produced to support the validation process, taking into consideration the change in question and/or its resulting impact. The documentation generated is usually attached to the Change Control (CC), not to the original validation.

An auditor may point out that something has changed and is no longer reflected in the original documentation. It is relatively easy and practical to 'version' some original validation documents to include changes, such as the Validation Plan, URS, and Risk Analysis.

Companies usually can't do the same with test scripts, because all their content and respective steps are contained within a single document. When a test script is updated (i.e., “saved as”), its unchanged tests are left without any test execution in the new version.

As a result, most validation professionals end up generating a new test script for each change. This alters the status of the original protocol and leaves in place a series of attachments that may themselves become outdated as new CCs are opened.

In short, over time, this kind of validation study becomes fragmented, since no consolidated version containing the updated tests was ever produced from the original validation.

The ideal process would be to have a single OQ (Operational Qualification) test script.

For example, tests for functions that have never changed since implementation can be combined with tests for changed functions in a single study that shows the traceability and version control of each test, including the respective reviews and approvals before and after testing.
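One way to picture a single OQ script with per-test traceability is a small data model in which each test carries its own version and the list of CCs that modified it. The sketch below is illustrative only: the class names `OQTest` and `OQScript`, the identifiers such as `OQ-002` and `CC-2024-017`, and the fields are all assumptions, not the data model of any particular validation tool.

```python
from dataclasses import dataclass, field


@dataclass
class OQTest:
    test_id: str
    description: str
    version: int = 1
    # IDs of the Change Controls that modified this test (hypothetical format).
    change_controls: list[str] = field(default_factory=list)


@dataclass
class OQScript:
    system: str
    tests: dict[str, OQTest] = field(default_factory=dict)

    def add(self, test: OQTest) -> None:
        self.tests[test.test_id] = test

    def revise(self, test_id: str, description: str, cc_id: str) -> None:
        """Update one test in place, bumping only that test's version."""
        test = self.tests[test_id]
        test.description = description
        test.version += 1
        test.change_controls.append(cc_id)

    def tests_for_cc(self, cc_id: str) -> list[OQTest]:
        """Tests touched by a given CC, e.g. to print a per-CC document set."""
        return [t for t in self.tests.values() if cc_id in t.change_controls]


oq = OQScript(system="Cloud ERP")
oq.add(OQTest("OQ-001", "User login with valid credentials"))
oq.add(OQTest("OQ-002", "Batch release requires QA approval"))
oq.revise("OQ-002", "Batch release requires QA approval and e-signature",
          cc_id="CC-2024-017")
```

Here `OQ-001` stays at version 1 inside the same consolidated script, while `OQ-002` moves to version 2 and records which CC caused the revision.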

Have you ever wondered if there exists a validation management system where one can generate sets of documents per CC or print the entire updated validation study in PDF format with updated items attached to the original study?

The answer is yes! This function has been designed for you in our GO!FIVE® software.

Change Control

The first step in a change is to open the Change Control document according to the company's procedure. Usually, changes to computer systems are also covered by the general CC (Change Control) SOP (Standard Operating Procedure); i.e., they may not be controlled by a specific CC form.

In any case, the content should describe the actions to be taken to execute a change or correction of the system after validation is completed. Critically, the Change Control should determine the extent of the verification activities and documentation required, always based on the Risk Analysis and the complexity of the change to be implemented.

A company's Change Control procedure should always include the management and control of all changes related to validated computerized systems with the objective of ensuring that they remain in that state.

Necessary changes to a validated computerized system must be approved by a multidisciplinary team that includes Quality Assurance. Emergency changes should always be recorded, reviewed for impact, and approved by Quality Assurance. The criteria for treating a change as an emergency should be described in the company's change control procedures.

Regression Tests

Regression Testing is a technique of applying tests to the latest version of the software to verify that no new defects have appeared in critical, already tested components. If new defects appear in any unchanged components, then the system is considered to have regressed.

When a system serves more than one site or country and a change is not required for all units, regression testing is also important to verify that there has been no impact on the other sites. Regression Testing of new versions proves that a change hasn’t broken any existing functionality. The Change Control should contain the Regression Testing process.

The CC uses the Functional Risk Analysis to identify critical impacts on the ability of the system to function. Regression tests should be performed when there is a possibility of indirect impact on “unchanged” critical functions.

Many questions usually arise about the content of these tests, but the selection is easier if it follows the risks classified as 'high' and 'medium' in the Risk Analysis performed during validation. Some tests must be planned as Regression Tests because they cover the weakest points in the system.

Thus, regression testing supports the entry of a change into the production environment without impact. It ensures that high and medium risks are under control and demonstrates consistency in the process. There is no need to completely repeat the system's operational qualification each time a change is made, which would not be feasible on a daily basis.
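The risk-based selection described above can be sketched in a few lines. This is a minimal illustration, assuming the Functional Risk Analysis is available as a list of functions, each with a risk rating and a linked test; the `RiskItem` structure, the function names, and the test IDs are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RiskItem:
    function: str
    risk: str      # "high", "medium" or "low", taken from the Risk Analysis
    test_id: str   # test that verifies this function (hypothetical IDs)


def select_regression_tests(risk_items, changed_functions):
    """Return test IDs for unchanged functions rated high or medium risk."""
    return [
        item.test_id
        for item in risk_items
        if item.risk in {"high", "medium"}
        and item.function not in changed_functions
    ]


risk_analysis = [
    RiskItem("electronic signature", "high", "OQ-010"),
    RiskItem("audit trail", "high", "OQ-011"),
    RiskItem("batch record report", "medium", "OQ-020"),
    RiskItem("dashboard colours", "low", "OQ-030"),
]

# The changed function (here, the report) is covered by its own new tests,
# so the regression suite targets the unchanged critical functions.
regression_suite = select_regression_tests(
    risk_analysis, changed_functions={"batch record report"}
)
print(regression_suite)  # ['OQ-010', 'OQ-011']
```

Low-risk items are left out of the suite, which is what keeps the regression effort smaller than a full repeat of the operational qualification.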

CAB (Change Advisory Board)

For implementation and/or improvement of the change management process, it is recommended that a team be formed to be responsible for approving and managing changes, supporting impact analysis and prioritization.

The team usually includes the following: CAB manager, IT and/or OT (automation) technical experts, users, Engineering, Maintenance, Quality Assurance and/or Validation (GxP).

CAB members are responsible for understanding the needs and impacts of the change from the technical, business, and quality perspectives. It is important to hold weekly briefing meetings for decision-making. CAB members are also responsible for monitoring the development, testing, and approval process for migrating the change from one environment to another.

Change Control by System (High Volume Changes)

CAB members should be involved in changes to profiles, functionality, hardware, software, configurations, patches, updates, etc. ITIL (Information Technology Infrastructure Library) is one set of best practices for IT governance.

Depending on the size of the company, control measures may be needed to adequately manage a high volume of changes, such as: (a) a rule that a change is submitted to the committee only after its cost has been approved; and (b) use of a system that manages each change from the initial request until its adequate operation is confirmed.

The system must manage the workflow (approval flow) of each functional and profile change, and each change must be tracked with at least the following information: ID (number identifying the change), sector/area, equipment and/or system, system owner, change content, classification of GxP relevance, document impact, production impact, implementation deadline, and dated follow-up fields.
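The minimum fields and the approval flow listed above could be modelled roughly as follows. This is only a sketch: the status names, the order of the workflow steps, and the example values are assumptions chosen for illustration and should be replaced by whatever the company's own Change Control procedure defines.

```python
from dataclasses import dataclass, field
from datetime import date
from enum import Enum


class Status(Enum):
    REQUESTED = "requested"
    COST_APPROVED = "cost approved"
    CAB_APPROVED = "CAB approved"
    IMPLEMENTED = "implemented"
    CONFIRMED = "confirmed"


# Allowed workflow transitions (hypothetical; follow your own CC procedure).
NEXT = {
    Status.REQUESTED: Status.COST_APPROVED,
    Status.COST_APPROVED: Status.CAB_APPROVED,
    Status.CAB_APPROVED: Status.IMPLEMENTED,
    Status.IMPLEMENTED: Status.CONFIRMED,
}


@dataclass
class ChangeRecord:
    change_id: str
    area: str
    system: str
    system_owner: str
    content: str
    gxp_relevant: bool
    document_impact: str
    production_impact: str
    deadline: date
    status: Status = Status.REQUESTED
    follow_up: list[tuple[date, str]] = field(default_factory=list)

    def advance(self, on: date, note: str) -> None:
        """Move to the next workflow step and log a dated follow-up entry."""
        if self.status not in NEXT:
            raise ValueError(f"{self.change_id} is already {self.status.value}")
        self.status = NEXT[self.status]
        self.follow_up.append((on, f"{self.status.value}: {note}"))


change = ChangeRecord(
    change_id="CHG-0001", area="Quality Control", system="LIMS",
    system_owner="QC Manager", content="Add a mandatory reviewer field",
    gxp_relevant=True, document_impact="URS and OQ test script",
    production_impact="None expected", deadline=date(2025, 3, 31),
)
change.advance(date(2025, 1, 10), "budget released")
change.advance(date(2025, 1, 17), "approved at weekly CAB meeting")
```

Placing the cost approval before the CAB step mirrors the entry rule in item (a), and the final `CONFIRMED` status corresponds to confirmation of adequate operation in item (b).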

Periodic Review

Regardless of how changes are handled, system validations should be reviewed periodically. In general, in this area, we avoid the term 'revalidation', and normally use a Periodic Review activity to verify that the system validation has been updated and, at the end of this task, to check that a system is still in validated status.

The periodic review of computerized systems should be performed to ensure that changes in processes, system components, or maintenance, for example, have not affected the system's validated status. A review schedule should be created for all systems.

The frequency of a validation review should be based on the criticality of the system. It is not productive to establish the same periodic review frequency for all systems in the company since some of them are more dynamic and more unstable than others.

It is recommended to use a variable frequency approach for the same system, and even more strongly recommended to schedule the first periodic review relatively soon after the end of the system validation (approximately 6 months), precisely to study post-implementation stability.

If this first periodic review is successful, it shows that the system is stable and that changes, user administration, and backups are all up to date. A periodic review can be postponed up to one year with frequency increased in the following year. This depends on the result of the last periodic review process.
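A variable review schedule of this kind can be expressed as a small date calculation. The sketch below is an assumption-laden illustration: the interval values per criticality level, the use of 182 days for "approximately 6 months", and the rule of returning to the short interval after an unsuccessful review are all hypothetical choices, not requirements taken from a regulation or from this article.

```python
from datetime import date, timedelta

# Hypothetical review intervals in days, keyed by system criticality.
INTERVAL_DAYS = {"high": 365, "medium": 730, "low": 1095}
FIRST_REVIEW_DAYS = 182  # roughly six months after validation ends


def next_review(validation_end, criticality, last_review=None, last_review_ok=True):
    """Return the due date of the next periodic review."""
    if last_review is None:
        # First review: close to the end of validation, to check stability.
        return validation_end + timedelta(days=FIRST_REVIEW_DAYS)
    if not last_review_ok:
        # Problems found: review again after the short initial interval.
        return last_review + timedelta(days=FIRST_REVIEW_DAYS)
    return last_review + timedelta(days=INTERVAL_DAYS[criticality])


validated_on = date(2024, 1, 15)
first = next_review(validated_on, "high")
second = next_review(validated_on, "high", last_review=first)
print(first, second)  # 2024-07-15 2025-07-15
```

Keeping the intervals in one table makes it easy to assign different frequencies to different systems, and to adjust them as the results of successive reviews come in.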

A review should take into consideration various aspects, such as:

- System validation documentation

- Documentation of the previous review

- Change controls issued

- Deviations that occurred

- Operational procedures

- System access control

- Correct execution of system backup

- Computer system status (e.g., disk space, RAM utilization)

- Status of history files and audit trails.

If any problems are found in the system, an action plan must be established.

During evaluation of the validation documentation, it is important to pay special attention to the Functional Risk Analysis and check whether the risk scenarios, classifications, and mitigations that were required at the time of implementation are still necessary and still in place.

New risk scenarios may arise if changes have not been well controlled.

In this case, it is necessary to revise the Risk Analysis and then perform a 'mini-validation' that identifies new mitigations (controls to lower the new risks) and includes tests to prove that the validation is in accordance with the current process.

A Periodic Review Report should not be finalized until all outstanding issues are resolved.

About the Author

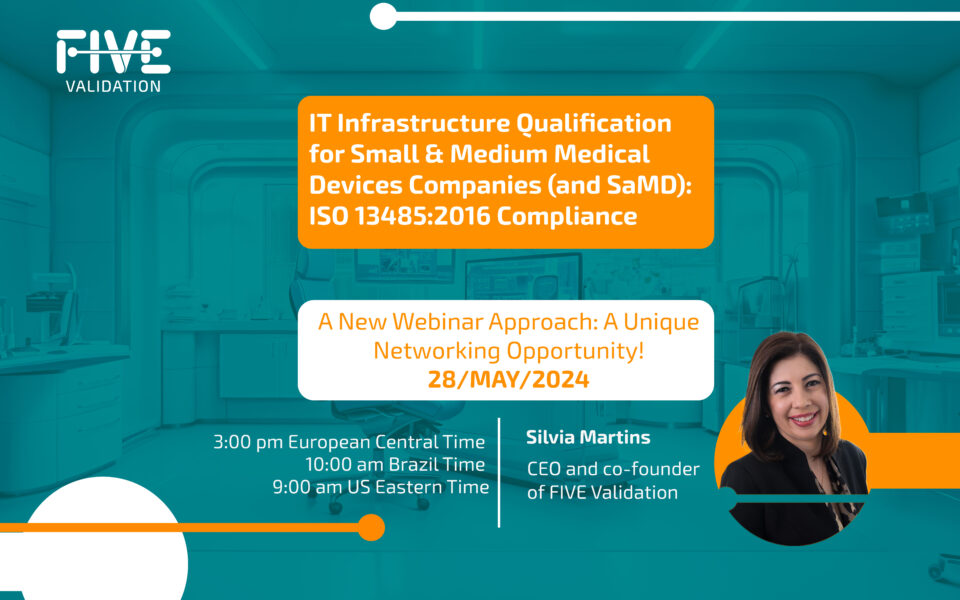

SILVIA MARTINS is an electrical engineer with over 20 years of experience in Systems Validation. She was trained in England in GAMP 5 and FDA 21 CFR Part 11, in SAP validation in Germany, and in Data Integrity and Data Governance in Denmark. She coordinated the group that drafted the first Guide for Computerized Systems Validation together with ANVISA, as well as the Data Integrity Manual and the Cloud Qualification of Suppliers Manual (both at Sindusfarma). She has also provided training courses for VISA and ANVISA inspectors in Brazil. Currently, she is the CEO and co-founder of FIVE Validation.