Business Intelligence (BI) Systems: how and when to validate

As we know, companies today need a clear overview of their data to remain competitive, whether for projections, risk assessment, or identifying opportunities.

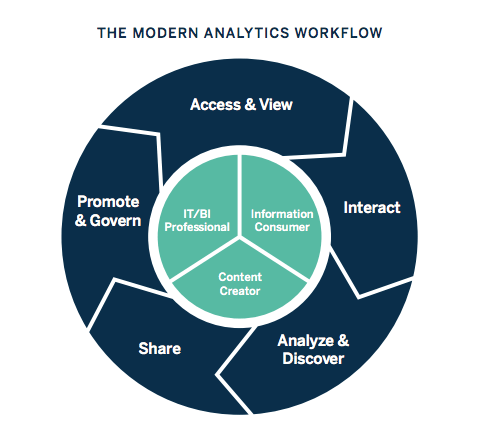

Basically, BI combines business analytics, data mining, data visualization, data tools and infrastructure, and recommended practices that help organizations make decisions based on data.

In general, these tools capture data from other systems, then store and analyze it. When that data is GxP-relevant (that is, it affects product quality, patient safety, and/or data integrity) and can therefore influence decision-making in GxP processes, these systems need to be validated.

It's also important to make sure that the source system where the data is collected is validated, so that the data is complete and intact from its origin.

In data mining, integrating these data sources may include statistical analysis and machine learning (ML) to discover trends.

It should be noted that system validation generally does not cover the models and algorithms themselves, but rather the veracity, integrity, and traceability of the data processed in the system.
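As an illustration of what checking the integrity of processed data can look like in practice, here is a minimal sketch of a source-to-BI reconciliation. It assumes both systems can export rows as dictionaries sharing a unique `id` key; the function names, field names, and sample values are hypothetical and not taken from any specific BI tool.

```python
import hashlib
import json


def record_fingerprint(record: dict) -> str:
    """Return a SHA-256 hash of a record, serialized with sorted keys."""
    canonical = json.dumps(record, sort_keys=True, default=str)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def reconcile(source_rows, bi_rows, key="id"):
    """Compare source and BI rows by key and report any discrepancies."""
    source = {row[key]: record_fingerprint(row) for row in source_rows}
    loaded = {row[key]: record_fingerprint(row) for row in bi_rows}
    return {
        # Present in the source system but never loaded into the BI tool
        "missing_in_bi": sorted(set(source) - set(loaded)),
        # Present in the BI tool with no matching source record
        "unexpected_in_bi": sorted(set(loaded) - set(source)),
        # Same key in both systems, but the content differs
        "altered": sorted(
            k for k in source.keys() & loaded.keys() if source[k] != loaded[k]
        ),
    }


# Hypothetical sample data: one record is missing and one was altered in transit
source_rows = [
    {"id": 1, "batch": "A-001", "assay": 99.2},
    {"id": 2, "batch": "A-002", "assay": 98.7},
    {"id": 3, "batch": "A-003", "assay": 97.9},
]
bi_rows = [
    {"id": 1, "batch": "A-001", "assay": 99.2},
    {"id": 2, "batch": "A-002", "assay": 89.7},
]

result = reconcile(source_rows, bi_rows)
print(result)
```

A check like this does not say anything about the quality of the analysis performed on the data; it only gives documented evidence that what the BI tool holds matches what the source system produced.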

Below we highlight some other items that should be checked in the validation study:

- Vendor Qualification;

- Integration with other platforms and applications;

- Qualification of the Information Technology infrastructure;

- Data validation;

- If data is entered incorrectly, how will it be corrected?

- Is there data integrity?

- Limitation of processed data;

- Access control;

- Customized reports and calculations.
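To make the question of how incorrectly entered data is corrected more concrete, here is a minimal sketch of an audit-trailed correction, where the old value, the new value, the author, the reason, and the timestamp are all recorded. The class and field names are hypothetical, and a real system would also need to persist the trail and protect it through access control.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditEntry:
    """One immutable audit trail line describing a single correction."""
    field_name: str
    old_value: object
    new_value: object
    changed_by: str
    reason: str
    changed_at: datetime


@dataclass
class AuditedRecord:
    """A record whose corrections are always logged with who, when, and why."""
    values: dict
    audit_trail: list = field(default_factory=list)

    def correct(self, field_name, new_value, changed_by, reason):
        # Refuse corrections that do not document why the change was made
        if not reason.strip():
            raise ValueError("A correction requires a documented reason")
        entry = AuditEntry(
            field_name=field_name,
            old_value=self.values.get(field_name),
            new_value=new_value,
            changed_by=changed_by,
            reason=reason,
            changed_at=datetime.now(timezone.utc),
        )
        # Log the change first, then apply it to the current values
        self.audit_trail.append(entry)
        self.values[field_name] = new_value


# Hypothetical example: fixing a transcription error in an assay result
record = AuditedRecord({"batch": "A-002", "assay": 89.7})
record.correct("assay", 98.7, changed_by="jdoe", reason="Transcription error")
print(record.values["assay"], len(record.audit_trail))
```

During validation, a test case along these lines would confirm that the original value remains traceable after the correction and that an undocumented change is rejected.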

Even if the tool is used only for trend checking, and any investigation is handled by analyzing the data from the source system, validating the BI tool is still recommended.

In any case, a project that optimizes and simplifies the visualization of important business data through BI tools usually reduces the risk of relevant data being underestimated or left out.

Overall, this type of tool also considerably reduces manual work, which is more prone to human error, and so increases the area's productivity.

I hope you enjoyed this article, and if you want to know more or need support in this kind of validation, please contact our experts: [email protected]

Reference:

https://www.tableau.com/learn/articles/business-intelligence